Modern digital systems generate an overwhelming volume of data every second, from application events and server metrics to user interactions and security alerts. Organizations need efficient ways to collect, analyze, and interpret this data in real time. Log aggregation tools like Datadog have become essential components in modern observability strategies, enabling teams to monitor infrastructure, detect anomalies, and respond to incidents proactively. By centralizing logs and metrics into a unified platform, these tools help engineering and operations teams maintain performance, reliability, and security.

TL;DR: Log aggregation tools such as Datadog centralize and analyze logs and metrics from multiple systems in real time. They help organizations monitor performance, detect anomalies, and resolve incidents faster. These platforms combine dashboards, alerts, and analytics for full observability. Choosing the right tool depends on scalability, integrations, pricing, and business needs.

Understanding Log Aggregation and Metrics Monitoring

At its core, log aggregation refers to the process of collecting log data from multiple systems, applications, and devices into a centralized repository. Logs often include server events, application errors, authentication attempts, API calls, and more. Without aggregation, teams would need to manually access individual systems to review logs, which is inefficient and time-consuming.
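As a rough illustration of what centralizing logs means in practice, the sketch below merges per-host log lines into a single time-ordered timeline. The host names (`web-1`, `auth-1`), the line format (an ISO 8601 timestamp followed by a message), and the `aggregate` helper are assumptions made for this example. A real agent or platform such as Datadog handles collection, parsing, and storage quite differently.

```python
import heapq
from datetime import datetime


def parse_line(source, line):
    """Split 'ISO-8601-timestamp message' into (timestamp, source, message)."""
    stamp, _, message = line.partition(" ")
    return datetime.fromisoformat(stamp), source, message


def aggregate(sources):
    """Merge per-source logs (each already in time order) into one timeline."""
    streams = [
        [parse_line(name, line) for line in lines]
        for name, lines in sources.items()
    ]
    # heapq.merge combines already-sorted inputs without re-sorting everything.
    return list(heapq.merge(*streams))


# Hypothetical log lines from two hosts.
sources = {
    "web-1": [
        "2024-01-01T12:00:00+00:00 GET /checkout 200",
        "2024-01-01T12:00:03+00:00 GET /checkout 500",
    ],
    "auth-1": [
        "2024-01-01T12:00:01+00:00 login failed user=alice",
    ],
}

timeline = aggregate(sources)
for stamp, source, message in timeline:
    print(stamp.isoformat(), source, message)
```

With everything in one timeline, the failed login on `auth-1` appears between the two `web-1` requests, which is the kind of cross-system correlation that is tedious to do host by host.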

Metrics monitoring, on the other hand, focuses on quantitative measurements such as CPU usage, memory consumption, request latency, error rates, or network throughput. While logs provide detailed event-level context, metrics offer high-level visibility into system health.
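To make the distinction concrete, the following sketch reduces a handful of event-level request records to a few summary metrics. The record fields (`latency_ms`, `status`) and the `summarize` function are hypothetical, chosen only for illustration.

```python
import statistics

# Hypothetical event-level records, as they might be parsed from request logs.
requests = [
    {"latency_ms": 120, "status": 200},
    {"latency_ms": 180, "status": 200},
    {"latency_ms": 200, "status": 200},
    {"latency_ms": 950, "status": 503},
]


def summarize(records):
    """Collapse individual request events into high-level health metrics."""
    latencies = [r["latency_ms"] for r in records]
    server_errors = sum(1 for r in records if r["status"] >= 500)
    return {
        "request_count": len(records),
        "error_rate": server_errors / len(records),
        "median_latency_ms": statistics.median(latencies),
        "max_latency_ms": max(latencies),
    }


summary = summarize(requests)
print(summary)
```

The summary shows that something is wrong (a 25% error rate and a slow outlier), while the individual records are still needed to see which request failed and why.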

Together, logs and metrics form the foundation of an observability practice, with traces commonly added as a third signal. Log aggregation tools unify this data to enable:

- Real-time monitoring of infrastructure and applications

- Automated alerting when anomalies occur

- Root cause analysis during outages

- Security investigation and compliance reporting

Why Businesses Rely on Tools Like Datadog

Datadog is often considered a leading solution in the observability space due to its cloud-native design and wide integration capabilities. However, it is not the only player. Businesses choose log aggregation platforms to overcome challenges such as distributed systems, hybrid cloud environments, and microservices architectures.

Key benefits of platforms like Datadog include:

1. Centralized Data Visibility

Rather than analyzing logs across multiple servers individually, teams access all relevant data from a single dashboard. This shortens investigations, because engineers no longer need to log in to each host to correlate events, and it gives different departments a shared view of the same data.

2. Real-Time Alerts

Advanced alerting features allow organizations to define thresholds and receive notifications through email, Slack, PagerDuty, or other integrations. This helps teams address incidents before they escalate into major outages.
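A threshold rule of this kind can be sketched in a few lines. The example below is a simplified assumption of how such a rule might be evaluated, not how Datadog or any other platform implements alerting. `ThresholdRule`, `should_alert`, and `notify` are hypothetical names, and `notify` only builds a message where a real system would call an email, Slack, or PagerDuty integration.

```python
from dataclasses import dataclass


@dataclass
class ThresholdRule:
    metric: str
    threshold: float
    min_consecutive: int  # breaches in a row required before alerting (>= 1)


def should_alert(rule, samples):
    """True if the latest `min_consecutive` samples all exceed the threshold."""
    recent = samples[-rule.min_consecutive:]
    return (
        len(recent) == rule.min_consecutive
        and all(value > rule.threshold for value in recent)
    )


def notify(rule, value):
    """Stand-in for a real notification channel; returns the alert text."""
    return f"ALERT: {rule.metric}={value} exceeded threshold {rule.threshold}"


rule = ThresholdRule(metric="error_rate", threshold=0.05, min_consecutive=3)
samples = [0.01, 0.06, 0.07, 0.09]

if should_alert(rule, samples):
    print(notify(rule, samples[-1]))
```

Requiring several consecutive breaches is one simple way to avoid paging someone for a single noisy data point.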

3. Scalable Cloud Architecture

Modern enterprises often operate across multi-cloud environments. Managed platforms like Datadog are built to scale ingestion with demand, so that spikes in log volume do not degrade search or dashboard performance.

4. Powerful Search and Filtering

Structured log indexing enables teams to query data efficiently. Filters can be applied by service, host, region, or custom tags for granular analysis.
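The sketch below shows the idea behind filtering structured records by field and tag. It is a plain in-memory illustration, assuming hypothetical field names (`service`, `host`, `region`, `tags`). Real platforms use their own query languages and indexes rather than a linear scan.

```python
def matches(record, **filters):
    """True if every filter matches; 'tags' must be a subset of the record's tags."""
    for key, wanted in filters.items():
        if key == "tags":
            if not set(wanted) <= set(record.get("tags", [])):
                return False
        elif record.get(key) != wanted:
            return False
    return True


# Hypothetical structured log records.
logs = [
    {"service": "checkout", "host": "web-1", "region": "eu-west-1",
     "tags": ["env:prod", "team:payments"], "message": "payment declined"},
    {"service": "checkout", "host": "web-2", "region": "us-east-1",
     "tags": ["env:prod"], "message": "payment accepted"},
    {"service": "search", "host": "web-3", "region": "eu-west-1",
     "tags": ["env:staging"], "message": "index refreshed"},
]

hits = [r for r in logs if matches(r, service="checkout", tags=["env:prod"])]
for record in hits:
    print(record["host"], record["message"])
```

Because each record carries its fields explicitly, narrowing by service, region, or tag is a matter of comparing values rather than parsing free text.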

Core Features to Look for in Log Aggregation Tools

When evaluating platforms for monitoring logs and metrics, organizations typically consider several core capabilities:

- Data ingestion flexibility – Support for multiple log sources, APIs, and agents

- Indexing and retention controls – Custom retention policies for cost management

- Visualization dashboards – Interactive graphs and customizable views

- Machine learning insights – Anomaly detection and predictive analysis

- Security monitoring – Threat detection and compliance reporting

- Integration ecosystem – Compatibility with cloud providers, CI/CD tools, and databases

Popular Log Aggregation Tools Compared

While Datadog is highly popular, several alternatives serve different use cases and budgets. Below is a comparison chart of leading log aggregation and monitoring tools.

| Tool | Strengths | Best For | Pricing Model | Cloud Native |
|---|---|---|---|---|
| Datadog | Extensive integrations, unified logs and metrics, strong dashboards | Large and mid-size cloud environments | Usage-based | Yes |
| Splunk | Powerful search capabilities, enterprise security features | Large enterprises and security teams | Data ingestion-based | Yes |
| ELK Stack (Elasticsearch, Logstash, Kibana) | Open source flexibility, customizable dashboards | Technical teams with DevOps expertise | Free and paid tiers | Partially |
| New Relic | Application performance monitoring, unified observability | Application-centric monitoring | Usage-based | Yes |
| Graylog | Centralized logging, open-source core | Small to mid-size teams | Free and enterprise plans | Partially |

Use Cases Across Industries

Log aggregation tools are valuable across numerous industries. Their applications extend far beyond basic server monitoring.

E-Commerce Platforms

Online retailers depend heavily on uptime and performance. Monitoring transaction logs, user activity, and server metrics helps them keep the customer experience smooth, especially during peak traffic events.

Financial Services

Banks and fintech companies use log aggregation to monitor transactions, detect fraudulent activities, and comply with strict regulatory standards. Detailed logging is essential for audits and investigations.

Healthcare Systems

Healthcare providers rely on monitoring tools to safeguard patient data, ensure availability of electronic health record systems, and maintain HIPAA compliance.

Software Development Teams

Development teams integrate log monitoring into CI/CD pipelines. This enables rapid detection of deployment failures and ensures efficient debugging during software releases.

The Role of Automation and AI

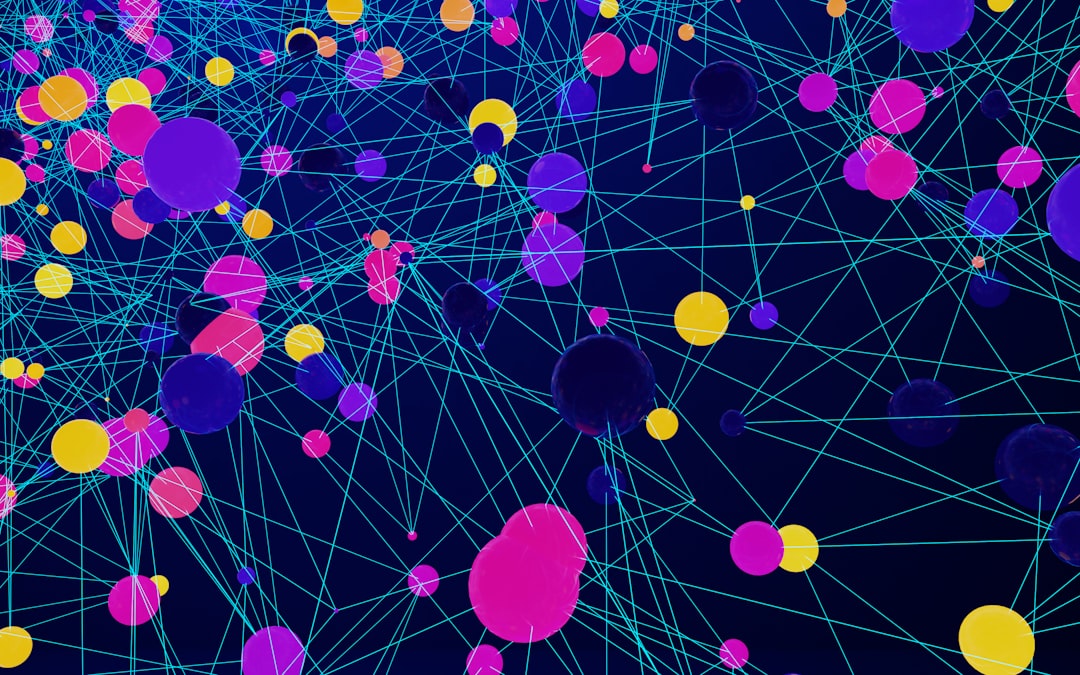

Modern log aggregation platforms increasingly incorporate artificial intelligence and machine learning to automate analysis. Instead of relying solely on predefined rules, AI systems examine behavioral patterns and identify anomalies automatically.

For example, if a server’s typical response time is 200 milliseconds, a sudden increase to 400 milliseconds could trigger an alert when that deviation falls well outside historical norms, even if no fixed threshold was ever configured. Capabilities like this reduce manual monitoring effort and typically include:

- Anomaly detection for unusual patterns

- Root cause suggestions based on historical incidents

- Predictive scaling insights for capacity planning
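The response-time example above can be approximated with a simple statistical check. The sketch below uses a z-score against recent history, which is a deliberately basic stand-in: commercial platforms use more sophisticated models that are not described here. The function name, the sample history, and the cutoff of 3 standard deviations are all assumptions.

```python
import statistics


def is_anomalous(history, value, z_threshold=3.0):
    """Flag `value` if it lies more than `z_threshold` standard deviations
    from the mean of `history` (which needs at least two samples)."""
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        # Perfectly flat history: any change at all is a deviation.
        return value != mean
    return abs(value - mean) / stdev > z_threshold


# Hypothetical recent response times in milliseconds, hovering around 200.
history_ms = [195, 205, 198, 202, 200, 207, 193, 201]

print(is_anomalous(history_ms, 210))  # small wobble
print(is_anomalous(history_ms, 400))  # the jump described above
```

The point of comparing against history rather than a fixed number is that the same 400 ms reading might be normal for a slower service and alarming for this one.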

Challenges in Log Aggregation

While log aggregation tools provide significant advantages, they are not without challenges:

- Cost management: High log ingestion volumes can drive up expenses.

- Data noise: Excessive logging without filtering can clutter dashboards.

- Retention policies: Balancing compliance requirements with storage costs.

- Integration complexity: Connecting legacy systems may require customization.

Organizations often mitigate these issues by implementing structured logging practices and filtering unnecessary logs before ingestion.
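A minimal sketch of filtering before ingestion might look like the following. The policy shown (drop `DEBUG` and `TRACE`, sample `INFO`, keep everything at `WARNING` and above) and the `keep` function are illustrative assumptions, not a recommendation from any vendor. The right policy depends on what each team needs to retain.

```python
import random

DROP_LEVELS = {"DEBUG", "TRACE"}


def keep(record, info_sample_rate=0.1, rng=random.random):
    """Decide whether a structured log record should be forwarded.

    `rng` is injectable so the sampling decision can be made deterministic.
    """
    level = record.get("level", "INFO").upper()
    if level in DROP_LEVELS:
        return False
    if level == "INFO":
        # Forward only a fraction of routine records.
        return rng() < info_sample_rate
    return True  # WARNING, ERROR, CRITICAL and anything unrecognized


# Hypothetical batch waiting to be shipped.
batch = [
    {"level": "DEBUG", "message": "cache lookup"},
    {"level": "INFO", "message": "request served"},
    {"level": "ERROR", "message": "payment gateway timeout"},
]

# A sample rate of 1.0 keeps every INFO record; lower it to cut volume.
kept = [r for r in batch if keep(r, info_sample_rate=1.0)]
print([r["level"] for r in kept])
```

Sampling trades completeness for cost, so it is usually applied only to high-volume, low-value records, never to errors.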

Best Practices for Implementing Log Monitoring

To maximize the value of log aggregation platforms, companies follow several best practices:

- Define clear objectives: Determine whether the focus is performance monitoring, security auditing, or compliance.

- Standardize log formats: Use structured logging such as JSON for easier parsing.

- Set meaningful alerts: Avoid alert fatigue by fine-tuning thresholds.

- Review dashboards regularly: Keep visualizations aligned with business priorities.

- Monitor costs: Track ingestion volumes and adjust retention as needed.
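As one way to standardize log formats, the sketch below emits JSON lines using Python's standard `logging` module. The `JsonFormatter` class, the chosen field names, and the convention of passing extra fields under a `context` key are assumptions for this example. Many teams use an existing JSON logging library instead.

```python
import json
import logging
from datetime import datetime, timezone


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object."""

    def format(self, record):
        payload = {
            "timestamp": datetime.fromtimestamp(
                record.created, tz=timezone.utc
            ).isoformat(),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Fields supplied via `extra={"context": {...}}` are merged in.
        payload.update(getattr(record, "context", {}))
        return json.dumps(payload)


handler = logging.StreamHandler()
handler.setFormatter(JsonFormatter())

logger = logging.getLogger("checkout")  # hypothetical service name
logger.addHandler(handler)
logger.setLevel(logging.INFO)
logger.propagate = False  # avoid duplicate output via the root logger

logger.info(
    "payment declined",
    extra={"context": {"service": "checkout", "region": "eu-west-1"}},
)
```

Each line is then machine-parseable, so fields such as `service` or `region` can be indexed and filtered without custom parsing rules.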

The Future of Log Aggregation

The future of observability is moving toward unified telemetry, combining logs, metrics, traces, and events into a single monitoring ecosystem. OpenTelemetry standards are simplifying data collection, while cloud-native architectures continue to shape monitoring requirements.

Platforms like Datadog are investing heavily in automation, security analytics, and deeper AI integration. As infrastructure becomes more distributed, especially with edge computing and serverless functions, log aggregation tools will become even more critical for maintaining transparency and control.

FAQ

1. What is log aggregation?

Log aggregation is the process of collecting and centralizing logs from various systems, applications, and devices into one platform for easier analysis and monitoring.

2. How does Datadog differ from traditional monitoring tools?

Datadog is cloud-native and integrates logs, metrics, and traces into a unified platform with real-time dashboards and AI-driven insights, unlike older tools that often operate in silos.

3. Are open-source log aggregation tools effective?

Yes, open-source platforms like ELK Stack and Graylog are powerful and flexible. However, they may require more setup and maintenance compared to managed solutions.

4. What factors influence the cost of log monitoring?

Costs typically depend on data ingestion volume, storage duration, the number of hosts monitored, and additional features such as security analytics or advanced AI capabilities.
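A back-of-the-envelope estimate can show how these factors combine. Every rate in the sketch below is a made-up placeholder, not a real vendor price, and the formula (ingestion plus steady-state storage plus a per-host fee) is a simplifying assumption. Actual pricing models vary and should be taken from the vendor's current price list.

```python
def estimate_monthly_cost(
    gb_per_day,
    retention_days,
    hosts,
    price_per_gb_ingested,
    price_per_gb_stored_month,
    price_per_host_month,
    days_in_month=30,
):
    """Rough monthly cost broken down by ingestion, storage, and hosts."""
    ingestion = gb_per_day * days_in_month * price_per_gb_ingested
    # At steady state, roughly `retention_days` worth of data is held.
    storage = gb_per_day * retention_days * price_per_gb_stored_month
    host_fees = hosts * price_per_host_month
    return {
        "ingestion": round(ingestion, 2),
        "storage": round(storage, 2),
        "hosts": round(host_fees, 2),
        "total": round(ingestion + storage + host_fees, 2),
    }


# Placeholder inputs: 50 GB/day, 15-day retention, 20 hosts, invented rates.
estimate = estimate_monthly_cost(
    gb_per_day=50,
    retention_days=15,
    hosts=20,
    price_per_gb_ingested=0.10,
    price_per_gb_stored_month=0.02,
    price_per_host_month=15.0,
)
print(estimate)
```

Even with invented numbers, a breakdown like this makes it easier to see which lever (volume, retention, or host count) dominates the bill.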

5. Can log aggregation improve security?

Absolutely. Centralized logging helps detect suspicious activity, monitor failed login attempts, analyze access patterns, and support compliance audits.

6. Is log aggregation necessary for small businesses?

While not always mandatory, even small businesses benefit from centralized logging as they scale. It simplifies troubleshooting and ensures proactive monitoring as complexity increases.

In an era of distributed applications and cloud-first infrastructures, log aggregation tools like Datadog have become indispensable. By unifying logs and metrics into actionable insights, they empower organizations to maintain resilience, optimize performance, and stay ahead of potential disruptions.