The internet is a busy place. Bots roam everywhere. Some are helpful. Some are harmful. To control the chaos, websites use anti-bot systems. At the same time, companies build proxy infrastructure software to manage traffic, testing, and automation. This article explains how these systems work, why detection happens, and what responsible teams should know.

TLDR: Anti-bot systems watch for patterns that look automated. Proxy infrastructure software helps manage traffic and identity online. There is a constant cat-and-mouse game between detection systems and traffic management tools. The key is to understand how it all works and to use these tools responsibly and legally.

Let’s break it down in simple terms.

What Is Anti-Bot Detection?

Websites do not like unwanted automated traffic. It wastes resources. It can scrape data. It can overload servers. So they use detection tools to spot automation.

Anti-bot systems look at:

- IP reputation

- Browser fingerprints

- Mouse movements

- Typing speed

- Request patterns

- Header consistency

If behavior looks unnatural, access can be slowed down or blocked.

Think of it like a security guard at a mall. If someone walks in calmly, no problem. If someone sprints through every store grabbing things, alarms go off.
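
To make "header consistency" a bit more concrete, here is a minimal sketch of one check a site owner might run on their own traffic: does the platform claimed in the `User-Agent` header agree with the `Sec-CH-UA-Platform` client hint? The function names and the matching rules are illustrative assumptions, not how any particular anti-bot product works.

```python
from typing import Mapping, Optional


def platform_from_user_agent(user_agent: str) -> Optional[str]:
    """Guess the platform a User-Agent string claims (a rough heuristic)."""
    ua = user_agent.lower()
    # Order matters: Android UAs contain "Linux", iPhone UAs contain "Mac OS X".
    if "android" in ua:
        return "Android"
    if "iphone" in ua or "ipad" in ua:
        return "iOS"
    if "windows" in ua:
        return "Windows"
    if "mac os x" in ua:
        return "macOS"
    if "linux" in ua:
        return "Linux"
    return None


def headers_look_consistent(headers: Mapping[str, str]) -> bool:
    """Return False only when the two platform claims clearly contradict."""
    # Assumes the caller passes header names with exactly this capitalization.
    ua_platform = platform_from_user_agent(headers.get("User-Agent", ""))
    # The client hint value arrives as a quoted string, e.g. "Windows".
    hinted = headers.get("Sec-CH-UA-Platform", "").strip().strip('"')
    if ua_platform is None or not hinted:
        return True  # not enough information to call it a mismatch
    return ua_platform.lower() == hinted.lower()
```

A real system would treat a mismatch as one signal among many rather than as proof of automation.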

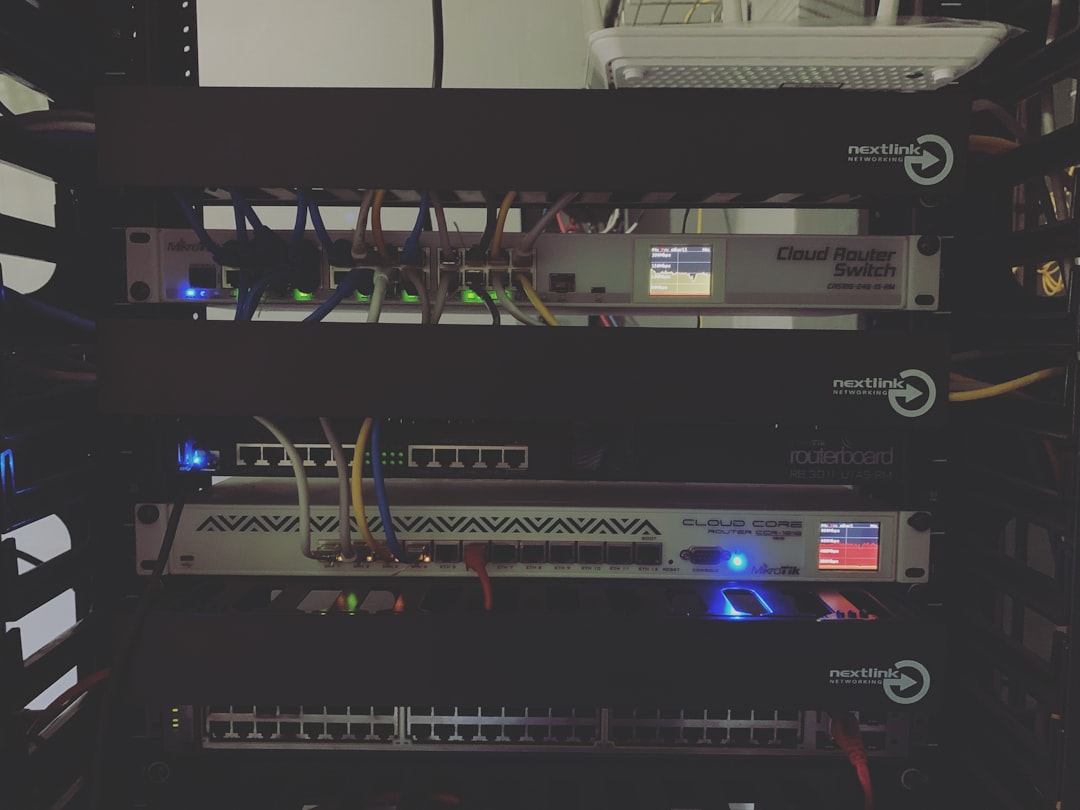

What Is Proxy Infrastructure Software?

Proxy infrastructure software routes traffic through intermediary servers. Instead of connecting directly, traffic passes through a proxy.

This can help:

- Balance traffic load

- Test applications globally

- Improve anonymity for security testing

- Monitor performance across regions

Proxies act like middlemen. They stand between a user and a website.

Used properly, proxy systems are powerful tools for businesses. Used improperly, they can trigger anti-bot systems quickly.
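
The "middleman" idea can be sketched with Python's standard library. This example only builds an opener that would route requests through a proxy. The proxy address `proxy.example.internal:8080` and the helper name `build_proxy_opener` are hypothetical placeholders, so swap in a proxy you are authorized to use.

```python
import urllib.request


def build_proxy_opener(proxy_url: str) -> urllib.request.OpenerDirector:
    """Return an opener that sends HTTP and HTTPS requests through one proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


# Hypothetical proxy address; nothing is sent until opener.open(url) is called.
opener = build_proxy_opener("http://proxy.example.internal:8080")
```

Calling `opener.open("https://example.com/")` would then connect to the proxy first, and the proxy would forward the request to the site.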

Why Detection Happens

Anti-bot systems detect automation because automated systems behave differently than humans.

Here are some general signals that raise flags:

- Repeated high-speed requests

- Large volumes of identical traffic

- Connections from flagged IP ranges

- Unusual geographic patterns

- Mismatched device and browser information

Detection is not random. It is statistical. It looks for anomalies.

Many sites and security vendors train machine learning models on traffic data to find these anomalies faster. The models are updated as traffic patterns change.
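
Here is a tiny illustration of what "statistical" can mean in practice. It compares one client's request rate against a baseline using a z-score. The sample numbers and the threshold of 3.0 are assumptions picked for the example, and production systems use far richer models.

```python
import math
from statistics import mean, pstdev
from typing import Sequence


def z_score(value: float, baseline: Sequence[float]) -> float:
    """How many standard deviations `value` sits from the baseline mean."""
    mu = mean(baseline)
    sigma = pstdev(baseline)
    if sigma == 0:
        # A flat baseline: any difference at all is infinitely unusual.
        return 0.0 if value == mu else math.copysign(math.inf, value - mu)
    return (value - mu) / sigma


def is_anomalous(value: float, baseline: Sequence[float], threshold: float = 3.0) -> bool:
    """Flag values that are unusually high compared with the baseline."""
    return z_score(value, baseline) > threshold


# Hypothetical requests-per-minute figures from ordinary visitors.
baseline_rpm = [12, 9, 11, 10, 13, 8, 10, 11]

print(is_anomalous(14, baseline_rpm))   # a slightly busy visitor
print(is_anomalous(120, baseline_rpm))  # a burst far outside the baseline
```

With this baseline, 14 requests per minute stays under the threshold while 120 is flagged.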

The Cat-and-Mouse Dynamic

The relationship between automation tools and anti-bot systems is dynamic.

When detection improves, traffic management tools adapt. When traffic tools evolve, detection systems tighten again.

However, there is an important line here.

Ethical and legal boundaries matter.

Companies that build proxy infrastructures for legitimate uses focus on compliance, permission-based testing, and transparent operations.

Common Types of Proxy Infrastructure Software

There are several categories of proxy tools used in enterprise environments.

1. Datacenter Proxies

- Fast and affordable

- Hosted in data centers

- Easier to detect due to IP reputation

2. Residential Proxies

- Use IP addresses assigned to homes

- Appear more like real users

- More expensive

3. Mobile Proxies

- Use mobile carrier networks

- Often rotate IPs naturally

- Common in app testing

4. Rotating Proxy Networks

- Automatically rotate IP addresses

- Designed for distributed traffic

- Need careful compliance management

Here is a simple comparison chart:

| Proxy Type | Speed | Cost | Detection Risk | Common Use |
|---|---|---|---|---|
| Datacenter | High | Low | High | Load testing |
| Residential | Medium | High | Lower | Geo testing |
| Mobile | Medium | Very High | Lower | App testing |
| Rotating Network | Variable | Variable | Depends on setup | Traffic distribution |

How Modern Anti-Bot Systems Think

Modern detection systems act like investigators. They combine many clues.

They look at:

- Behavioral biometrics – How the cursor moves

- Timing patterns – Human pauses vs automated speed

- Device fingerprint consistency

- Historical traffic analysis

- ASN and network reputation

The key word is correlation. A single odd behavior might pass. Many signals together trigger action.
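
The correlation idea can be sketched as a simple weighted score. The signal names, weights, and thresholds below are all made up for illustration. Real systems learn these from data and use many more inputs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signals:
    flagged_ip_range: bool
    header_mismatch: bool
    no_pointer_movement: bool
    uniform_request_timing: bool


# Hypothetical weights (points per signal); integers keep the math exact.
WEIGHTS = {
    "flagged_ip_range": 30,
    "header_mismatch": 30,
    "no_pointer_movement": 20,
    "uniform_request_timing": 20,
}


def risk_score(signals: Signals) -> int:
    """Add up the weights of every signal that fired."""
    return sum(weight for name, weight in WEIGHTS.items() if getattr(signals, name))


def decide(signals: Signals, throttle_at: int = 40, block_at: int = 70) -> str:
    """Map a combined score to an action; one weak signal alone is allowed."""
    score = risk_score(signals)
    if score >= block_at:
        return "block"
    if score >= throttle_at:
        return "throttle"
    return "allow"


one_odd_thing = Signals(True, False, False, False)
several_clues = Signals(True, True, True, False)
print(decide(one_odd_thing), decide(several_clues))
```

A single flagged signal scores 30 and passes. Three together score 80 and get blocked.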

Responsible Techniques for Reducing False Positives

Now here is the important part.

If you are running legitimate automation, such as performance testing or API integration, you want to avoid unnecessary blocking. But you must stay ethical and legal.

Best practices include:

- Requesting API access when available

- Following robots.txt guidelines

- Rate-limiting your own traffic

- Being transparent with websites during testing

- Using compliance-verified proxy providers

This approach is not about “beating” detection. It is about cooperating with systems while achieving business goals.
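
Two of the practices above, following robots.txt and rate-limiting your own traffic, are easy to sketch with Python's standard library. The robots.txt content, the `example-test-bot` user agent, and the `MinIntervalLimiter` class are hypothetical examples. `Crawl-delay` is a widely used but non-standard directive, so not every site sets it.

```python
import urllib.robotparser
from typing import Optional

# Hypothetical robots.txt content; normally you would fetch the site's own file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""

USER_AGENT = "example-test-bot"  # hypothetical bot name


def load_rules(robots_txt: str) -> urllib.robotparser.RobotFileParser:
    """Parse robots.txt text into a rule object we can query."""
    parser = urllib.robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


class MinIntervalLimiter:
    """Enforce a minimum gap (in seconds) between our own requests."""

    def __init__(self, min_interval_s: float) -> None:
        self.min_interval_s = min_interval_s
        self._last_send: Optional[float] = None

    def wait_needed(self, now: float) -> float:
        """Return how long to wait before sending, and record the send time."""
        if self._last_send is None:
            wait = 0.0
        else:
            wait = max(0.0, self._last_send + self.min_interval_s - now)
        self._last_send = now + wait
        return wait


rules = load_rules(ROBOTS_TXT)
delay = rules.crawl_delay(USER_AGENT) or 1  # fall back to 1 second if unset
limiter = MinIntervalLimiter(float(delay))
```

In a real client you would check `rules.can_fetch(USER_AGENT, url)` before each request, then call `time.sleep(limiter.wait_needed(time.monotonic()))` before sending.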

Infrastructure Design Matters

Enterprise proxy infrastructure should focus on:

- Scalability

- Redundancy

- Monitoring

- Compliance logs

- IP hygiene management

A strong system tracks its own behavior. It knows when request volume spikes. It alerts teams before problems grow.

Transparency reduces risk.
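
One way a system can "track its own behavior" is a sliding-window counter on outgoing requests. This is a minimal sketch. The class name `RequestRateMonitor`, the window size, and the budget are assumptions, and a real deployment would feed the result into its alerting stack.

```python
from collections import deque


class RequestRateMonitor:
    """Count our own requests in a sliding time window and flag spikes."""

    def __init__(self, window_s: float, max_requests: int) -> None:
        self.window_s = window_s
        self.max_requests = max_requests
        self._timestamps = deque()

    def record(self, now: float) -> bool:
        """Record one outgoing request; return True if we are over budget."""
        self._timestamps.append(now)
        cutoff = now - self.window_s
        # Drop timestamps that have aged out of the window.
        while self._timestamps and self._timestamps[0] <= cutoff:
            self._timestamps.popleft()
        return len(self._timestamps) > self.max_requests


# Hypothetical budget: at most 3 requests in any 10-second window.
monitor = RequestRateMonitor(window_s=10, max_requests=3)
for t in (0, 1, 2, 3):
    if monitor.record(now=t):
        print(f"alert: request volume spiked at t={t}s")
```

Here the fourth request inside the window trips the alert, which gives a team the chance to slow down before a site starts blocking.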

The Role of Machine Learning

Both sides use machine learning.

Anti-bot systems use it for:

- Anomaly detection

- Threat scoring

- Real-time blocking

Proxy management tools use it for:

- Traffic optimization

- Load balancing

- Network health prediction

It is like two smart chess players studying each other.

Legal and Ethical Considerations

This topic is exciting. But it is also sensitive.

Bypassing safeguards on websites without permission can:

- Violate terms of service

- Break computer misuse laws

- Trigger account bans

- Lead to legal penalties

Organizations should always:

- Consult legal teams

- Use contracts for data access

- Respect privacy regulations

- Document automation purposes

There is a big difference between testing your own system and interfering with someone else’s.

The Future of Anti-Bot Technology

Detection is leaning more on signals that separate real human behavior from scripts.

Expect more:

- Behavioral AI scoring

- Continuous authentication

- Device attestation systems

- Stronger identity verification

At the same time, infrastructure software will become:

- More distributed

- More cloud-native

- More AI-managed

- More compliance-driven

The future is not about bypassing systems. It is about building smarter and safer automation.

Final Thoughts

Anti-bot systems exist for a reason. They protect websites, users, and data.

Proxy infrastructure software also has a purpose. It enables testing, research, and distributed systems.

The space between them is where innovation happens.

But innovation should always stay ethical.

Short version? Understand how detection works. Build clean infrastructure. Follow the rules. And remember that the smartest engineers focus on sustainability, not shortcuts.

The internet works best when both security and automation evolve together.