APIs are the highways of the internet. They move data between apps, services, and users every second. But what happens when traffic explodes? Everything slows down. Or worse, it crashes. That’s where API rate limiting platforms like NGINX step in to save the day.

TLDR: API rate limiting controls how many requests users or systems can send within a set time. Tools like NGINX help prevent overload, improve security, and keep services stable. They act like traffic cops for your APIs. Without rate limiting, your API can be abused, overwhelmed, or taken offline.

Let’s break it down in a simple way. No jargon overload. Just clear ideas and real-world examples.

What Is API Rate Limiting?

Imagine a coffee shop. One barista. Ten customers. That’s manageable.

Now imagine 10,000 customers rush in at once. Chaos.

API rate limiting sets rules. It says:

- How many requests are allowed

- Who can make them

- How often they can be made

For example:

- 100 requests per minute per user

- 1,000 requests per hour per API key

- 10 login attempts per 5 minutes per IP

When the limit is hit, the system responds with a friendly but firm message. Usually a 429 Too Many Requests error.
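To make the example limits concrete, here is a minimal sketch of a counter-based limiter. It is an illustration under stated assumptions, not how any particular platform implements it: the names `LIMITS`, `FixedWindowLimiter`, and `check` are made up for this example, and the clock is passed in as `now` (seconds) so the behavior is easy to follow.

```python
from collections import defaultdict

# Hypothetical policy table mirroring the example limits above:
# kind -> (max requests, window length in seconds).
LIMITS = {
    "user": (100, 60),         # 100 requests per minute per user
    "api_key": (1000, 3600),   # 1,000 requests per hour per API key
    "login_ip": (10, 300),     # 10 login attempts per 5 minutes per IP
}


class FixedWindowLimiter:
    """Counts requests per (kind, identity) in back-to-back time windows."""

    def __init__(self, limits):
        self.limits = limits
        # (kind, identity, window index) -> requests seen in that window.
        # Old windows are never purged here; a real limiter would expire them.
        self.counts = defaultdict(int)

    def check(self, kind, identity, now):
        """Return an HTTP status: 200 if allowed, 429 if over the limit."""
        max_requests, window = self.limits[kind]
        bucket = (kind, identity, int(now // window))
        if self.counts[bucket] >= max_requests:
            return 429  # Too Many Requests
        self.counts[bucket] += 1
        return 200
```

With this sketch, ten calls to `check("login_ip", "203.0.113.7", now=0)` return 200 and the eleventh returns 429. A call at `now=300` lands in the next window and is allowed again.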

This keeps everything fair. And stable.

Why API Rate Limiting Matters

APIs power mobile apps, websites, IoT devices, and more. If they fail, everything that depends on them fails.

Rate limiting helps by:

- Preventing server overload

- Blocking brute force attacks

- Stopping API abuse

- Ensuring fair usage

- Controlling costs in cloud environments

Without limits, one bad actor could consume all your resources.

And here’s the thing. Not all traffic spikes are attacks. Sometimes your app goes viral. Congrats! But you still need control.

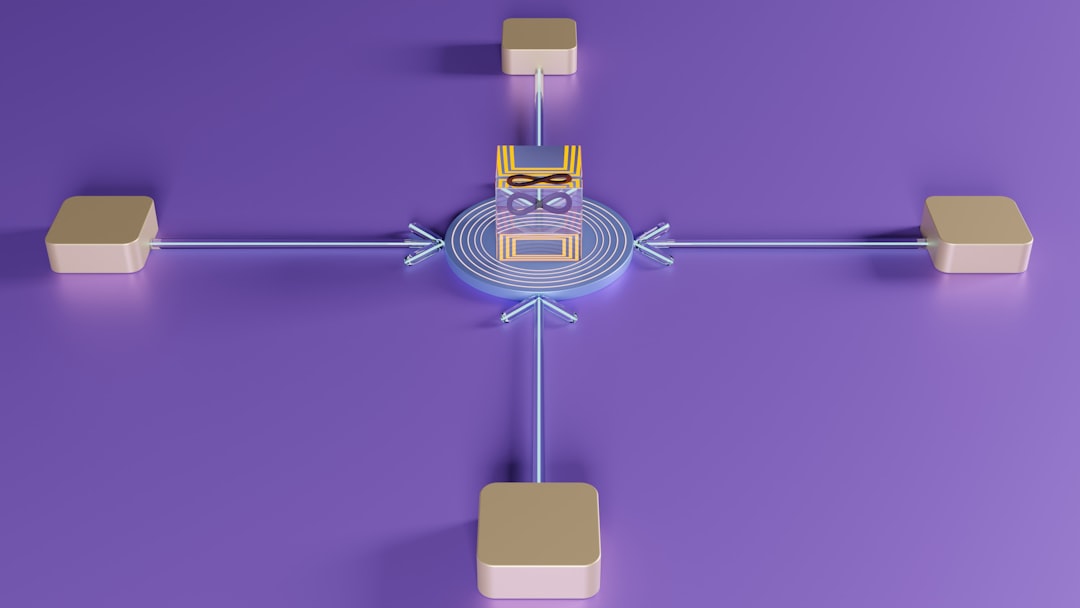

Meet NGINX: The Traffic Controller

NGINX started as a high-performance web server. Today, it’s also a reverse proxy, load balancer, and API gateway.

And yes. It’s amazing at rate limiting.

Think of NGINX as a vigilant gatekeeper. It watches every request. It counts them. It decides what gets through.

How NGINX Rate Limiting Works

NGINX uses something called the leaky bucket algorithm. Sounds funny. Works brilliantly.

Picture a bucket with a small hole at the bottom.

- Requests pour in from the top.

- They leak out at a steady rate.

- If too many requests arrive at once, the bucket overflows.

- Overflowing requests are delayed or rejected.

This creates smooth traffic flow.

Even during sudden spikes.
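The bucket picture above can be sketched in a few lines of Python. This is a simplified model for illustration, not NGINX's actual implementation: the class name `LeakyBucket` and its parameters are assumptions, and overflowing requests are simply rejected rather than delayed.

```python
class LeakyBucket:
    """A bucket that drains at a steady rate and rejects on overflow."""

    def __init__(self, rate, capacity):
        self.rate = rate          # requests that leak out per second
        self.capacity = capacity  # how many requests the bucket can hold
        self.level = 0.0          # how full the bucket currently is
        self.last = 0.0           # time of the previous request, in seconds

    def allow(self, now):
        # Drain whatever leaked out since the previous request.
        elapsed = now - self.last
        self.level = max(0.0, self.level - elapsed * self.rate)
        self.last = now
        # One more request would overflow the bucket: reject it.
        if self.level + 1 > self.capacity:
            return False
        self.level += 1
        return True
```

With `LeakyBucket(rate=1, capacity=3)`, five requests at the same instant give three accepted and two rejected. One second later, one request's worth has leaked out, so exactly one more fits.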

A Simple Configuration Example

With NGINX, rate limiting is configured in just a few lines:

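- Define a limit zone with limit_req_zone (which key to track and how much memory to use)

- Set the request rate for that zone (for example, rate=10r/s)

- Apply the zone to specific endpoints with limit_req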

You can limit:

- By IP address

- By user token

- By API key

- By custom headers

Flexible. Powerful. Efficient.
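Choosing what to limit by comes down to picking a key for each request. The sketch below shows that choice in application code rather than NGINX configuration. Everything in it is an assumption for illustration: the function name `limit_key`, the `X-API-Key` header name, and the idea that headers arrive as a plain dict with exact-case names.

```python
def limit_key(remote_addr, headers):
    """Pick the identity a request is counted against.

    Preference order in this sketch: API key, then bearer token, then IP.
    "X-API-Key" is a hypothetical header name, not a standard one.
    """
    api_key = headers.get("X-API-Key")
    if api_key:
        return "key:" + api_key
    auth = headers.get("Authorization", "")
    if auth.startswith("Bearer "):
        return "token:" + auth[len("Bearer "):]
    # Fall back to the client address when nothing else identifies the caller.
    return "ip:" + remote_addr
```

Falling back to the IP address keeps anonymous traffic limited too, while authenticated callers are counted by who they are rather than where they connect from.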

Hard Limits vs Soft Limits

There are different flavors of rate limiting.

1. Hard Limits

- Once the limit is hit, requests are blocked immediately.

- No exceptions.

2. Soft Limits

- Requests may be delayed instead of rejected.

- Allows minor bursts.

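NGINX supports this with the burst parameter of limit_req. Requests that arrive faster than the configured rate are queued, up to the burst size, and released at that rate. Anything beyond the burst is rejected. Add nodelay and queued requests are served right away instead of waiting. This means users can briefly exceed the limit without instant rejection.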

It’s like letting a few extra customers into the store before locking the door.
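The difference between the two flavors is easier to see side by side. Below is a simplified model, loosely inspired by how a rate plus a burst allowance behaves. It is not NGINX's code, and the names `BurstLimiter`, `handle`, and `hard` are made up for this sketch.

```python
class BurstLimiter:
    """Decide whether a request passes, is delayed, or is rejected."""

    def __init__(self, rate, burst, hard=False):
        self.rate = rate      # sustained requests per second
        self.burst = burst    # extra requests tolerated above the rate
        self.hard = hard      # hard limit: no queueing, reject any excess
        self.excess = 0.0     # how far ahead of the rate this client is
        self.last = None      # time of the last accepted request

    def handle(self, now):
        """Return ("pass", 0.0), ("delay", seconds) or ("reject", 0.0)."""
        if self.last is None:
            excess = 0.0
        else:
            # Time passing pays down the excess; each request adds one.
            excess = max(0.0, self.excess - (now - self.last) * self.rate + 1)
        allowed = 0 if self.hard else self.burst
        if excess > allowed:
            return ("reject", 0.0)  # rejected requests do not change state
        self.excess = excess
        self.last = now
        if excess > 0:
            # Soft limit: hold the request until the rate catches up.
            return ("delay", excess / self.rate)
        return ("pass", 0.0)
```

With `rate=2` and `burst=2`, four simultaneous requests come back as pass, delay 0.5 s, delay 1.0 s, and reject. With `hard=True`, the second simultaneous request is rejected outright, and the next one passes half a second later.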

Rate Limiting Algorithms Explained Simply

Different systems use different logic to control traffic.

Here are the most common ones:

- Fixed Window – 100 requests per minute. Resets every minute.

- Sliding Window – Tracks requests over a rolling time period.

- Token Bucket – Tokens are added steadily. Each request uses a token.

- Leaky Bucket – Smooths traffic by releasing requests at a fixed rate.

NGINX primarily uses the leaky bucket model.

It’s simple. Predictable. Reliable.
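Two of the models in the list, sliding window and token bucket, are sketched below for comparison. These are minimal illustrations with hypothetical class names, and time is passed in as `now` (seconds) instead of being read from a clock.

```python
from collections import deque


class SlidingWindowLimiter:
    """Allow at most `limit` requests in any rolling `window` seconds."""

    def __init__(self, limit, window):
        self.limit = limit
        self.window = window
        self.timestamps = deque()  # times of accepted requests, oldest first

    def allow(self, now):
        # Forget requests that have slid out of the window.
        while self.timestamps and self.timestamps[0] <= now - self.window:
            self.timestamps.popleft()
        if len(self.timestamps) >= self.limit:
            return False
        self.timestamps.append(now)
        return True


class TokenBucket:
    """Tokens refill steadily; each request spends one token."""

    def __init__(self, rate, capacity):
        self.rate = rate                # tokens added per second
        self.capacity = capacity        # maximum tokens the bucket holds
        self.tokens = float(capacity)   # start with a full bucket
        self.last = 0.0                 # time of the previous request

    def allow(self, now):
        # Add the tokens earned since the previous request, up to capacity.
        earned = (now - self.last) * self.rate
        self.tokens = min(self.capacity, self.tokens + earned)
        self.last = now
        if self.tokens < 1:
            return False
        self.tokens -= 1
        return True
```

The practical difference: the token bucket lets a client that has been quiet spend saved-up tokens in a burst, while the sliding window only cares how many requests fall inside the most recent period.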

Real-World Use Cases

Rate limiting isn’t just theory. It’s everywhere.

1. Login Protection

Limit login attempts to prevent brute force attacks.

2. Public APIs

Offer free tier users 500 requests per day. Paid users get more.

3. E-commerce Flash Sales

Prevent bots from buying all the inventory.

4. Microservices Communication

Prevent one service from overwhelming another.

Without rate limiting, one runaway process could crash your entire system.

NGINX vs Other API Rate Limiting Platforms

NGINX is powerful. But it’s not alone.

Here’s a comparison of popular API rate limiting platforms:

| Platform | Type | Strengths | Best For |
|---|---|---|---|
| NGINX | Web server and API gateway | High performance, flexible config, lightweight | Custom setups and high traffic systems |
| Kong | API gateway | Plugin ecosystem, easy extensions | Microservices and plugin driven setups |
| Apigee | Full API management platform | Analytics, monetization tools | Enterprise API programs |
| AWS API Gateway | Cloud managed gateway | Fully managed, auto scaling | AWS cloud environments |

Quick takeaway: If you want lightweight, fast, and highly customizable rate limiting, NGINX is a top choice. If you want a fully managed enterprise suite, other tools may fit better.

How Rate Limiting Improves Security

Security is a huge reason to use rate limiting.

Here’s how it helps:

- Stops brute force password attacks

- Prevents credential stuffing

- Helps mitigate application-layer DDoS attacks

- Slows scraping bots

Think of it as reducing attack speed. Even if attackers try, they’re slowed down.

And slower attacks are easier to detect and block.

NGINX can also combine rate limiting with:

- IP blacklisting

- Geo filtering

- Web application firewalls

Layered security is smart security.

Performance Benefits

Here’s something counterintuitive.

Limiting traffic can make your system feel faster.

Why?

Because controlled traffic prevents bottlenecks.

Instead of total failure under load, your system:

- Responds predictably

- Drops excess requests gracefully

- Maintains uptime

Users prefer a small error message over a completely broken app.

Best Practices for API Rate Limiting

Rate limiting is powerful. But you must configure it wisely.

1. Know Your Traffic Patterns

Study normal usage. Set limits slightly above typical peaks.

2. Separate Limits by Tier

Free users and paid users should not have the same limits.

3. Protect Sensitive Endpoints First

Login. Password reset. Payment processing.

4. Provide Clear Error Messages

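Tell users when they have exceeded a limit. Include retry timing, for example in a Retry-After header. Note that NGINX rejects rate-limited requests with a 503 by default. Set limit_req_status 429 to return the more accurate status.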

5. Monitor and Adjust

Traffic evolves. Your rules should too.
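Two of these practices, separate limits per tier and clear error messages, fit in one small sketch. It assumes a daily quota tracked elsewhere. The function name `rate_limit_response`, the `TIER_LIMITS` table, and the paid-tier figure are all hypothetical. Only the 429 status and the Retry-After header are standard HTTP.

```python
import math

SECONDS_PER_DAY = 86_400

# Requests per day for each tier. The free figure echoes the 500-per-day
# example used earlier; the paid figure is a made-up placeholder.
TIER_LIMITS = {"free": 500, "paid": 50_000}


def rate_limit_response(tier, used_today, now, day_start):
    """Return (status, headers, body) for a request from the given tier."""
    # Unknown tiers fall back to the free limit.
    limit = TIER_LIMITS.get(tier, TIER_LIMITS["free"])
    if used_today < limit:
        return 200, {}, "OK"
    # Seconds until the daily counter resets, rounded up, at least 1.
    retry_after = max(1, math.ceil(day_start + SECONDS_PER_DAY - now))
    headers = {"Retry-After": str(retry_after)}
    body = (
        f"Rate limit of {limit} requests per day exceeded. "
        f"Try again in {retry_after} seconds."
    )
    return 429, headers, body
```

A free-tier caller who has used all 500 requests with 400 seconds left in the day gets a 429 with `Retry-After: 400`. A paid caller with the same usage gets a 200.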

Common Mistakes to Avoid

- Setting limits too low and frustrating real users

- Not testing limits under real load

- Ignoring internal service-to-service traffic

- Failing to log rejected requests

Rate limiting should protect users. Not punish them.

The Future of API Traffic Management

APIs are growing fast. Microservices. Mobile apps. AI integrations. IoT devices.

That means more traffic. More complexity. More risk.

Modern platforms are adding:

- AI-driven anomaly detection

- Dynamic rate limits based on behavior

- Usage-based billing integration

NGINX continues to evolve too. Especially in cloud-native and Kubernetes environments.

The goal stays the same.

Keep APIs fast. Safe. Reliable.

Final Thoughts

APIs are like digital highways. Without traffic control, they clog instantly.

API rate limiting platforms like NGINX provide that control.

They prevent overload. Improve security. Ensure fairness. And protect your infrastructure.

The concept is simple. Limit how much comes in. Control the flow. Protect the system.

Whether you run a small startup API or a global enterprise platform, rate limiting is not optional anymore.

It’s essential.

Because on the internet, traffic never sleeps.

And neither should your traffic control.